A (biased) introduction to Knowledge Compilation

Florent Capelli

Université d’Artois - CRIL

Back to School Conference

October 06, 2023

ADVERTISEMENT

CRIL

Computer science Research Institute of Lens

Specialized in AI, from GOFAI to ML.

http://www.cril.univ-artois.fr/

Interns, PhD students welcome!

Reach out at capelli@cril.fr.

Lens is roughly the same distance from Paris Gare du Nord as Saclay in the SNCF topology.

Knowledge: representation and reasoning

Knowledge in AI

Knowledge is a central notion for AI:

- Formalizing knowledge, from Antiquity and before: birth of formal logic

- Volatile notion which escapes classical logic:

- Natural language rarely expresses facts of the form

- Pieces of knowledge may contradict each other

- Modeling beliefs and fuzzy facts

Rich fields of research:

- Many forms of logic: modal, epistemic, conditional

- Reasoning with ontologies, under uncertainties, contradictory beliefs

(Propositional) Knowledge Bases

Data + Knowledge = Knowledge Base

Propositional Knowledge Bases:

- Set of Propositions: “The model is TWINGO”, “The color is GOLD”

- Knowledge encoded as propositional formulas:

- Knowledge base can be seen as

- e.g.: because it does not contain !

Reasoning on Knowledge Bases

Reasoning tasks of various nature:

- Decision: can we construct a golden Twingo with engine X112-Y?

- Optimization: what is the cheapest golden Twingo we can construct?

- Sampling: sample a car model following market forecasts?

- Aggregation: what is the expected benefit from selling a golden Twingo?

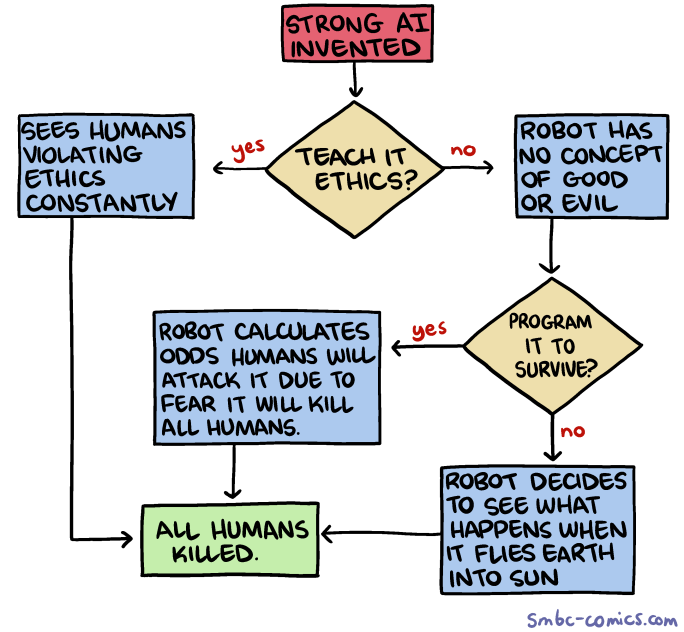

Addressing the elephant on the network

This talk is about a specific topic tagged as AI but which has almost nothing to do with ChatGPT.

While ChatGPT represents knowledge and reasons on it in a way, it is merely an illusion:

- No formal guarantees of the soundness of the reasoning.

- Sampling and counting are intrinsically computationally expensive problems. This is witnessed both theoretically and in practice. Need for dedicated tools.

Representing Knowledge Bases

Knowledge base for a finite set of propositions .

Implicit representation

- Sets of “true” formulas on .

- Natural representation: the one usually written down by humans

- Deciding whether is hard

Explicit representation

- List every .

- Knowledge is flattened and hence easy to access

- HUGE
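As a rough illustration of the explicit representation, the sketch below lists every model of a tiny knowledge base by brute force. The propositions `twingo`, `gold`, `diesel` and the rule "a golden car must be a Twingo" are made up for the example and are not taken from any real catalogue.

```python
from itertools import product

def models(variables, formula):
    """Explicit representation: list every assignment that satisfies `formula`."""
    result = []
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if formula(assignment):
            result.append(assignment)
    return result

# Hypothetical toy knowledge base: gold implies twingo.
variables = ["twingo", "gold", "diesel"]
kb = lambda a: (not a["gold"]) or a["twingo"]

explicit = models(variables, kb)
print(len(explicit), "models out of", 2 ** len(variables))
```

The loop visits all 2^n assignments, which is exactly why this representation does not scale.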

Looking for tradeoffs

One Minute to Cool Down

60s

Wrap up:

- Knowledge is hard to represent and reason with

- Today: propositional knowledge bases

- Goal: Find good tradeoffs between concise representations and tractability

Knowledge Representation Languages

Representing Boolean functions

A Propositional Knowledge Base is a subset of .

This is a Boolean function:

How can we represent Boolean functions?

CNF Formulas

where is a literal or for some variable .

Examples:

The SAT Problem

CNF formulas are extremely simple yet can encode many interesting problems.

Cook, Levin, 1971: The problem SAT of deciding whether a CNF formula is satisfiable is NP-complete.

Valiant 1979: The problem #SAT of counting the satisfying assignments of a CNF formula is #P-complete.

- Very unlikely that efficient algorithms exist for solving SAT / #SAT

- A thriving community nevertheless addresses these problems in practice

- SAT solvers are very efficient in many applications
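To make SAT and #SAT concrete, here is a minimal brute-force sketch. It assumes the usual DIMACS-style convention (a clause is a list of signed integers, `-2` meaning "not x2"); the example formula is arbitrary. Real solvers are far smarter than this exhaustive loop.

```python
from itertools import product

def satisfies(assignment, cnf):
    """assignment maps a variable index to a bool; literals are signed ints."""
    return all(any(assignment[abs(l)] == (l > 0) for l in clause) for clause in cnf)

def count_models(cnf, n_vars):
    """#SAT by exhaustive enumeration: 2**n_vars evaluations."""
    count = 0
    for values in product([False, True], repeat=n_vars):
        assignment = {i + 1: v for i, v in enumerate(values)}
        if satisfies(assignment, cnf):
            count += 1
    return count

def is_satisfiable(cnf, n_vars):
    """SAT reduces to asking whether the count is non-zero."""
    return count_models(cnf, n_vars) > 0

# (x1 or x2) and (not x1 or x3) and (not x2 or not x3)
cnf = [[1, 2], [-1, 3], [-2, -3]]
print(is_satisfiable(cnf, 3), count_models(cnf, 3))
```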

Relevance of CNF formulas

- Natural encoding: succinctly encodes many problems, witnessed by the many existing industrial benchmarks.

- Intractable for reasoning and counting

Not very interesting for reasoning tasks.

Circuit Based Representations

Research has focused on factorized representations.

An example

Data structure based on decision nodes to represent “ is even”.

Path for , and is accepting.

OBDDs

The previous data structure is an Ordered Binary Decision Diagram (OBDD).

- Directed Acyclic graphs with one source

- Sinks are labeled by or

- Internal nodes are decision nodes on a variable in

- Variables tested in order.
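A small sketch of this definition, assuming the parity example means "the number of variables set to 1 among x1..xn is even". The node naming `(parity_so_far, variable_index)` is an illustrative choice, not a standard encoding.

```python
def parity_obdd(n):
    """OBDD for 'an even number of x1..xn are 1'.
    A node is named (parity_so_far, i) and stores (variable, low_child, high_child);
    sinks are the booleans False and True."""
    nodes = {}
    for i in range(1, n + 1):
        # Before reading x1 the parity is necessarily 0.
        for parity in ((0,) if i == 1 else (0, 1)):
            if i < n:
                low, high = (parity, i + 1), (1 - parity, i + 1)
            else:
                low, high = parity == 0, parity == 1
            nodes[(parity, i)] = (i, low, high)
    return nodes, (0, 1)

def evaluate(nodes, root, assignment):
    """Follow the unique path selected by the assignment down to a sink."""
    node = root
    while not isinstance(node, bool):
        var, low, high = nodes[node]
        node = high if assignment[var] else low
    return node

nodes, root = parity_obdd(3)
print(len(nodes), evaluate(nodes, root, {1: 1, 2: 1, 3: 0}))
```

The diagram has about 2n nodes, whereas the truth table of the same function has 2^n rows.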

Row of 1

Let’s draw an OBDD that detects whether a matrix with has a row full of .

Row of 1 (Continued)

How many -matrices have a row full of ones?

- Case Analysis:

: matrices

: matrices

matrices

Total:
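The matrix dimensions did not survive in this text, so the sketch below takes n × n Boolean matrices as an assumption. It compares a brute-force count with a case analysis on the first row that is full of ones: earlier rows must not be full, later rows are free.

```python
from itertools import product

def brute_force(n):
    """Count n x n 0/1 matrices having at least one row full of ones."""
    count = 0
    for bits in product([0, 1], repeat=n * n):
        rows = [bits[r * n:(r + 1) * n] for r in range(n)]
        if any(all(row) for row in rows):
            count += 1
    return count

def by_cases(n):
    """Case i: row i is the first full row.
    Rows before i: 2**n - 1 choices each (anything but all ones).
    Rows after i: unconstrained, 2**n choices each."""
    not_full = 2 ** n - 1
    return sum(not_full ** (i - 1) * 2 ** (n * (n - i)) for i in range(1, n + 1))

for n in (1, 2, 3):
    print(n, brute_force(n), by_cases(n))
```

The cases are disjoint, so the counts simply add up; this is the same reasoning a path-by-path traversal of the OBDD performs.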

Tractability of OBDDs

This idea can be generalized to any OBDD:

Let be a function computed by an OBDD having edges. We can compute with arithmetic operations.

Generalises to many tasks:

- Evaluate if probabilities are given for each

- Enumerate

- Find the element of in lexicographical order…
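A possible sketch of model counting on an OBDD with one pass over its nodes. It assumes variables are numbered 1..n and tested in increasing order; the node names (`"a"`, `"b"`) and the example function x1 ∨ x2 are illustrative.

```python
def count_models(nodes, root, n_vars):
    """nodes: name -> (variable, low_child, high_child); sinks are True/False.
    Each node is processed once thanks to memoisation."""
    memo = {}

    def level(node):
        # Sinks sit conceptually just below the last variable.
        return n_vars + 1 if isinstance(node, bool) else nodes[node][0]

    def count(node):
        # Number of satisfying assignments of variables level(node)..n_vars.
        if isinstance(node, bool):
            return int(node)
        if node not in memo:
            var, low, high = nodes[node]
            memo[node] = sum(
                count(child) * 2 ** (level(child) - var - 1)  # skipped variables are free
                for child in (low, high)
            )
        return memo[node]

    return count(root) * 2 ** (level(root) - 1)

# x1 or x2, seen as a function of three variables (x3 is never tested).
nodes = {"a": (1, "b", True), "b": (2, False, True)}
print(count_models(nodes, "a", 3))
```

Replacing the factor 2 of a free variable by p + (1 - p) = 1 and weighting each edge by the probability of its value would turn the same traversal into a probability computation.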

Good candidate for representing Boolean functions!

Limits of OBDDs

The order of variables matters a lot:

Every OBDD computing has size .

FBDD

Same as OBDD, but variables may be tested in different orders on different paths, as long as each variable is tested at most once on every path.

Advantages: more succinct

Drawbacks:

- no canonical minimization, no efficient apply operation, etc.

- actually, not that powerful: cannot be represented by polynomial size FBDDs.

One Minute to Cool Down

60s

Wrap up:

CNF formulas: natural, powerful, but not tractable

Knowledge Compilation

From CNF to …

Knowledge compilation: amortize the compilation (offline) phase during the query (online) phase

- Source language: CNF (in this talk and in most existing work)

- Target language ???

Target Language

Many choices are possible: OBDD, FBDD, and many many others. Depends on what we want to do.

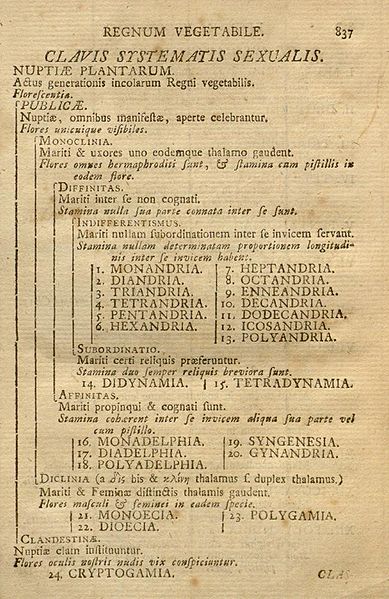

Knowledge Compilation Map [Darwiche, Marquis 2001]

| Notation | Query | Explanation |
|---|---|---|
| CO | Consistency check | Is D satisfiable? |
| VA | Validity check | Is D a tautology? |
| CE | Clause entailment | Is D[τ] satisfiable? |
| SE | Sentential entailment | Does D1 ⇒ D2 hold? |
| CT | Model counting | How many solutions does D have? |
| ME | Model enumeration | Enumerate the solutions of D. |

| | CO | VA | CE | SE | CT | ME |
|---|---|---|---|---|---|---|
| DNNF | ✓ | × | ✓ | × | × | ✓ |
| d-DNNF | ✓ | ✓ | ✓ | × | ✓ | ✓ |
| dec-DNNF | ✓ | ✓ | ✓ | × | ✓ | ✓ |
| FBDD | ✓ | ✓ | ✓ | × | ✓ | ✓ |
| OBDD | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

A Knowledge Compiler for FBDD

Exhaustive DPLL with Caching based on Shannon Expansion:

This scheme is parameterized by:

- caching policy

- branching heuristics
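A minimal sketch of such a compiler, under simplifying assumptions: the cache is keyed on the exact residual formula, and the branching heuristic picks the most frequent variable (ties broken by smallest index). Both choices are illustrative and are not the policy of any particular tool.

```python
from collections import Counter

def condition(cnf, lit):
    """Simplify a CNF (frozenset of frozensets of signed ints) once `lit` is true."""
    out = set()
    for clause in cnf:
        if lit in clause:
            continue                  # clause already satisfied
        out.add(clause - {-lit})      # drop the falsified literal
    return frozenset(out)

def compile_fbdd(cnf, cache):
    """Return True, False, or a decision node (var, low, high)."""
    if not cnf:
        return True                   # no clause left: every extension is a model
    if frozenset() in cnf:
        return False                  # empty clause: conflict
    if cnf not in cache:
        counts = Counter(abs(l) for clause in cnf for l in clause)
        var = max(sorted(counts), key=counts.get)        # branching heuristic
        low = compile_fbdd(condition(cnf, -var), cache)  # Shannon expansion, var = 0
        high = compile_fbdd(condition(cnf, var), cache)  # Shannon expansion, var = 1
        cache[cnf] = low if low == high else (var, low, high)
    return cache[cnf]

def evaluate(node, assignment):
    while isinstance(node, tuple):
        var, low, high = node
        node = high if assignment[var] else low
    return node

cnf = frozenset(frozenset(c) for c in [[1, 2], [-1, 3], [-2, -3]])
fbdd = compile_fbdd(cnf, {})
print(fbdd)
```

On this input the two branches below x1 test x2 and x3 in opposite orders, so the result is an FBDD that is not an OBDD.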

Exploiting decomposition

For many tasks, such as model counting, it is interesting to detect syntactically decomposable parts of the formula, that is:

and

- dec-DNNF: FBDD + decomposable ∧-gates

- Still allows for model counting via the identity

- Compilers can be adapted to detect this rule.
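The decomposition rule can be sketched as follows: clauses that share no variable form independent components, and the model count of the conjunction is the product of the counts of the components. The per-component counter here is plain brute force, used only to keep the example short.

```python
from itertools import product
from math import prod

def split_components(cnf):
    """Group clauses into (variables, clauses) blocks that share no variable."""
    comps = []
    for clause in cnf:
        vars_ = {abs(l) for l in clause}
        merged, rest = [clause], []
        for cvars, cclauses in comps:
            if cvars & vars_:          # overlaps the new clause: merge
                vars_ |= cvars
                merged += cclauses
            else:
                rest.append((cvars, cclauses))
        comps = rest + [(vars_, merged)]
    return comps

def brute_count(clauses, variables):
    variables = sorted(variables)
    total = 0
    for values in product([False, True], repeat=len(variables)):
        a = dict(zip(variables, values))
        if all(any(a[abs(l)] == (l > 0) for l in c) for c in clauses):
            total += 1
    return total

def count_by_components(cnf, n_vars):
    comps = split_components(cnf)
    used = set().union(*(v for v, _ in comps))
    free = n_vars - len(used)          # variables appearing in no clause
    return prod(brute_count(c, v) for v, c in comps) * 2 ** free

cnf = [[1, 2], [-2, 3], [4, 5]]        # two components: {1, 2, 3} and {4, 5}
print(count_by_components(cnf, 6))
```

Each component is enumerated over its own variables only, so the work is 2^3 + 2^2 evaluations instead of 2^6.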

Existing Tools

- Top-down model counters: Cachet, SharpSAT

- Top-down knowledge compilers: DSharp, D4

- Bottom-up compilers: SDD, c2d, CUDD (for manipulating decision diagrams), ADDMC

The D4 compiler

D4 is a top-down compiler as shown earlier:

- Use oracle calls to a SAT solver with clause learning to cut branches and speed up later computation

- Use heuristics to decompose the formula so that it breaks into smaller connected components.

- Nice tools from graph theory

- Interesting research questions around these heuristics

The Power of decomposable ∧-gates

Is it useful to have ∧-gates in practice?

Yes, exponential gain in circuit size on some instances:

There is a family of Boolean functions such that any FBDD computing has size at least but can be computed by a -sized dec-DNNF.

One Minute to Cool Down

60s

Wrap up:

- Many existing Target Languages: chosen depending on the supported queries

- Branch and bound approach for compilation: importance of heuristics

- Many actual tools exist and can be used!

KC as a tool

Data structures used in KC can be used in other areas of computer science to leverage existing results.

KC meets Databases

Relational Databases and queries

Data stored as relations (tables):

People

| Id | Name | City |
|---|---|---|
| 1 | Alice | Paris |
| 2 | Bob | Lens |
| 3 | Carole | Lille |
| 4 | Djibril | Berlin |

Capital

| City | Country |
|---|---|
| Berlin | Germany |
| Paris | France |
| Roma | Italy |

Conjunctive queries

SQL is a full-fledged language, hard to study.

A large class of queries can be expressed in a smaller fragment: conjunctive queries.

People

| Id | Name | City |
|---|---|---|
| 1 | Alice | Paris |
| 2 | Bob | Lens |
| 3 | Carole | Lille |
| 4 | Djibril | Berlin |

Capital

| City | Country |
|---|---|
| Berlin | Germany |
| Paris | France |
| Roma | Italy |

- because

-

because:

- AND

- .

Conjunctive Queries (continued)

Conjunctive queries are queries of the form: where

- is a tuple of variables from

- are relation symbols

Database : list of relations filled with values in domain

Defines a new table where if each part of on variables are in .

CQs correspond to JOIN queries in SQL.
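As an illustration, here is a sketch of one conjunctive query evaluated as a hash join over the People and Capital tables. The query itself, Q(name, country) :- People(id, name, city), Capital(city, country), is an assumption chosen for the example.

```python
people = [(1, "Alice", "Paris"), (2, "Bob", "Lens"),
          (3, "Carole", "Lille"), (4, "Djibril", "Berlin")]
capital = [("Berlin", "Germany"), ("Paris", "France"), ("Roma", "Italy")]

def answers(people, capital):
    """Join People and Capital on the shared variable `city`."""
    by_city = {}
    for city, country in capital:          # index Capital by the join attribute
        by_city.setdefault(city, []).append(country)
    return [(name, country)
            for _, name, city in people
            for country in by_city.get(city, [])]

print(answers(people, capital))
```

In SQL terms this corresponds to `SELECT Name, Country FROM People JOIN Capital ON People.City = Capital.City`.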

Hardness of solving conjunctive queries

Bad news: given a conjunctive query and a database , it is NP-complete to decide whether !

And yet database systems solve this kind of query all the time!

- Query is usually small wrt

- Join tables following an optimized query plan

- Leverage clever indexing algorithms

- Use clever heuristics based on statistics gathered earlier

Acyclic queries

Central class of conjunctive queries because of their tractability.

Not every CQ is acyclic

Yannakakis Algorithm

Twisting Yannakakis for Counting

Total of solutions.
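The counting idea can be sketched on a path-shaped (hence acyclic) query R(x, y), S(y, z), T(z, w): weights are propagated bottom-up along the join tree, and the join is never materialised. The relations below are made-up data, and the code assumes each relation is a set (no duplicate tuples).

```python
from collections import Counter

def count_path_join(r, s, t):
    """Count tuples (x, y, z, w) with (x, y) in r, (y, z) in s, (z, w) in t."""
    # Leaf: number of T-tuples extending each value of z.
    t_weight = Counter(z for z, _ in t)
    # Middle: each S-tuple (y, z) passes the weight of z up to y.
    s_weight = Counter()
    for y, z in s:
        s_weight[y] += t_weight[z]
    # Root: each R-tuple (x, y) contributes the weight of y.
    return sum(s_weight[y] for _, y in r)

r = [(1, "a"), (2, "a"), (3, "b")]
s = [("a", 10), ("a", 20), ("c", 30)]
t = [(10, "p"), (10, "q"), (30, "p")]
print(count_path_join(r, s, t))
```

The running time is linear in the total size of the three relations, even when the number of answers is much larger.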

Trace of the Yannakakis Algorithm

The trace of the Yannakakis algorithm on an acyclic CQ is a decision-DNNF (over a non-Boolean domain) of size linear in the data.

Factorized Databases

Data structures known as “Factorized Databases”.

For every acyclic query and database , one can build a decision-DNNF computing of size .

Knowledge compilation style approach. One can efficiently:

- decide whether

- compute

- enumerate

Unifies existing results and pushes the hardness into the compilation phase.

Going further

This compilation result can be used to recover many other results:

- Ranked access: given and some order on , output in time

- Optimization: find the tuple of that maximizes a linear function

- Aggregation over a semi-ring where

Knowledge Compilation meets Optimization

Boolean Optimization Problem

BPO problem:

where is a polynomial.

Observation: may be assumed to be multilinear since over

where

Example

is maximal.
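A brute-force sketch of the problem, including the multilinear reduction (x^k = x on {0, 1}). The polynomial 2·x1²·x2 − 3·x2·x3 + x3 is a made-up example, not the one from the talk.

```python
from itertools import product

def multilinearize(monomials):
    """monomials: list of (coefficient, {variable: exponent}).
    Exponents are dropped because x**k == x when x is 0 or 1."""
    poly = {}
    for coef, powers in monomials:
        key = frozenset(v for v, e in powers.items() if e > 0)
        poly[key] = poly.get(key, 0) + coef
    return poly

def evaluate(poly, x):
    """A monomial contributes its coefficient iff all its variables are 1."""
    return sum(coef for mono, coef in poly.items() if all(x[v] for v in mono))

def maximize(poly, variables):
    """Exhaustive search over {0,1}^n; only meant for tiny instances."""
    best = max(product([0, 1], repeat=len(variables)),
               key=lambda vals: evaluate(poly, dict(zip(variables, vals))))
    point = dict(zip(variables, best))
    return point, evaluate(poly, point)

# Hypothetical polynomial: 2*x1^2*x2 - 3*x2*x3 + x3
poly = multilinearize([(2, {1: 2, 2: 1}), (-3, {2: 1, 3: 1}), (1, {3: 1})])
print(maximize(poly, [1, 2, 3]))
```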

Algebraic Model Counting

Semi ring:

- commutative, associative

- ,

- .

Boolean function and :

AMC Examples

- If on :

- Arctic semi-ring

Allows encoding optimization problems on Boolean functions.
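A sketch of algebraic model counting on an OBDD, parameterised by the semiring operations. It assumes the usual definition (⊕ over models of the ⊗ of literal weights), variables 1..n tested in increasing order, and illustrative weights; the example function is x1 ∨ x2 over three variables.

```python
import operator

def amc(nodes, root, n_vars, weight, plus, times, zero, one):
    """nodes: name -> (variable, low, high); sinks are True/False.
    weight[(v, b)] is the weight of setting variable v to bit b."""
    memo = {}

    def level(node):
        return n_vars + 1 if isinstance(node, bool) else nodes[node][0]

    def free(lo, hi):
        # Untested variables lo..hi-1 may take both values.
        acc = one
        for v in range(lo, hi):
            acc = times(acc, plus(weight[(v, 0)], weight[(v, 1)]))
        return acc

    def value(node):
        if isinstance(node, bool):
            return one if node else zero
        if node not in memo:
            var, low, high = nodes[node]
            branches = []
            for bit, child in ((0, low), (1, high)):
                edge = times(weight[(var, bit)], free(var + 1, level(child)))
                branches.append(times(edge, value(child)))
            memo[node] = plus(*branches)
        return memo[node]

    return times(free(1, level(root)), value(root))

nodes = {"a": (1, "b", True), "b": (2, False, True)}

# Counting semiring (+, *, 0, 1) with all weights 1: plain model counting.
ones = {(v, b): 1 for v in (1, 2, 3) for b in (0, 1)}
n_models = amc(nodes, "a", 3, ones, operator.add, operator.mul, 0, 1)

# Arctic semiring (max, +, -inf, 0): best total gain over all models.
gain = {(1, 0): 0, (1, 1): 3, (2, 0): 0, (2, 1): -1, (3, 0): 0, (3, 1): 2}
best = amc(nodes, "a", 3, gain, max, operator.add, float("-inf"), 0)
print(n_models, best)
```

The traversal is identical in both cases; only the meaning of ⊕ and ⊗ changes.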

BPO and Boolean Functions

For define: where

encodes !

and on as:

- and

- for .

Encoding BPO as Boolean function: an example

Example:

- , and .

BPO as a Boolean Function

where .

Try using Algebraic Model Counting for BPO:

- compile into, e.g., OBDD

- compute in time .

Rich connection

- Good practical results (e.g. using D4)

- Leverage known tractable classes of CNF to BPO

- Allows for solving more complex optimization problems

Example: solve such that :

How?

- Construct OBDD that computes

- Transform into so that it computes

- Compute

Doggy Bag

Take Home Message

- Original motivation of Knowledge Compilation: reasoning with knowledge bases

- Renault Example

- Configuration problems in general

- Interesting data structures to solve many tasks on Boolean Functions

- Enumeration

- Algebraic Model Counting

- Transfer tractability and tools by encoding problems into Boolean Functions:

- Databases

- Optimization problems